HireHut's Smart Interview IDE – The Future of Technical Hiring

- Authors: HireHut (@hirehut_org)

We've all been through bad technical interviews.

The ones where you're coding in a Google Doc with no syntax highlighting. Or sharing your screen while three people watch you typo your way through a problem. Or worst of all, whiteboarding algorithms while someone times you with a stopwatch.

These interviews don't measure coding ability. They measure how well you perform under artificial pressure in an environment nothing like actual work.

As engineers who've been on both sides of technical interviews, we knew there had to be a better way. So we built one.

Why technical interviews are broken

The problem with most technical interviews isn't the questions - it's the environment.

Inconsistent setups: Some candidates code on their own machines with their favorite tools. Others use unfamiliar online editors. The environment becomes a variable that affects performance, making it impossible to compare candidates fairly.

No integrity verification: When candidates code at home, you can't be sure they're working alone. Help from friends, copying from Stack Overflow, using AI assistants - there's no way to know. You end up doubting good performances and questioning whether you're evaluating the person or their support system.

Subjective evaluation: Two interviewers watching the same coding session will come away with different impressions. One sees "methodical problem-solving" while another sees "too slow." One notices "clean code" while another misses subtle bugs. Without objective metrics, hiring becomes a gut-feel game.

Stressful conditions: Coding while being watched is nerve-wracking. Artificial time pressure, unfamiliar environments, and the weight of evaluation make people perform worse than they would in actual work. You're not seeing their best - you're seeing them stressed.

The result? Inconsistent evaluations, missed talent, bad hires, and a terrible candidate experience.

What we built instead

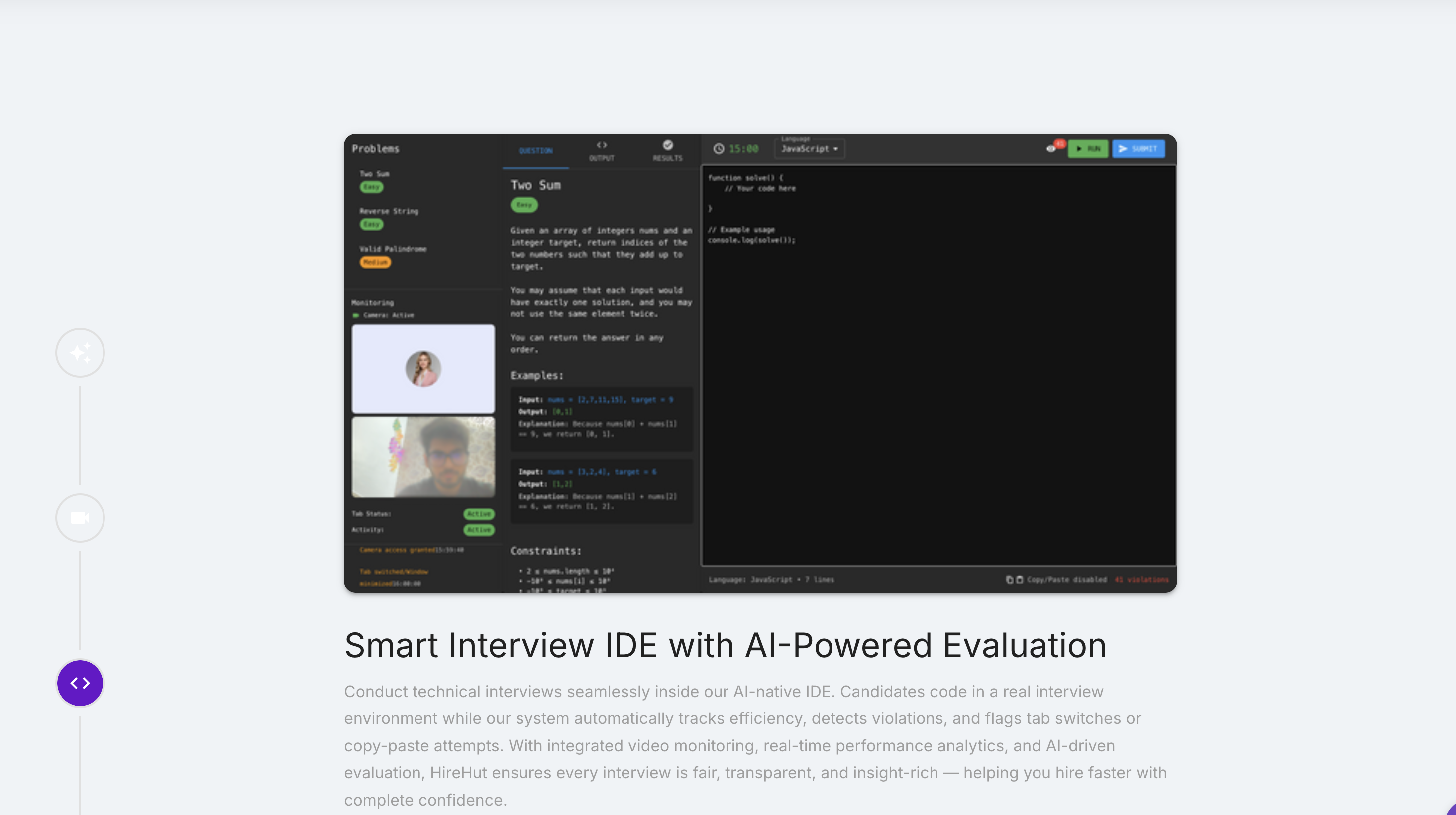

HireHut's Smart Interview IDE is a complete coding environment designed specifically for technical interviews. It's not a toy editor or a repurposed development tool - it's purpose-built for fair, comprehensive evaluation.

A real development environment

Candidates code in a fully featured IDE that supports major programming languages, including Python, JavaScript, Java, C++, Go, and Ruby. Not stripped-down versions, not limited subsets - the real languages with full standard libraries.

Syntax highlighting: Proper color coding and formatting so code is readable.

Intelligent autocomplete: Context-aware suggestions that help with syntax without solving the problem.

Error detection: Real-time highlighting of syntax errors and common mistakes.

Debugging tools: Console output, error messages, and basic debugging capabilities.

Multiple file support: For problems that require organizing code across files.

For candidates, it feels like coding in VS Code or their preferred editor, not fighting with an unfamiliar tool. This is critical - we want to evaluate coding skills, not the ability to adapt to strange interfaces.

Comprehensive integrity monitoring

Here's where the Smart Interview IDE gets interesting. While candidates code, the system monitors a set of behavioral signals to keep the evaluation fair.

Tab switching detection: The system tracks when candidates switch to other tabs or applications. An occasional reference to documentation is fine - we log it but don't penalize. But patterns of repeated switching to search engines or chat apps get flagged.

Copy-paste analysis: When code is pasted rather than typed, the system flags it. The AI analyzes whether the candidate is pasting their own code from elsewhere in the file (fine) or pasting solutions from external sources (a problem).

Typing pattern analysis: The system monitors coding rhythm and flow. Humans type with natural variation - bursts of typing, pauses to think, occasional backspacing. Code that appears in perfect blocks with no corrections or thinking time is suspicious.

Video monitoring: An integrated webcam feed captures candidates during the interview. Not to make them uncomfortable, but to provide context. Are they reading from another screen? Looking at notes on their desk? Or are they looking at the problem and thinking? The video adds crucial context to the code patterns.

External assistance detection: The AI looks for patterns that suggest external help - sudden changes in coding style, solutions that appear complete without iteration, or code that uses techniques not demonstrated elsewhere in the interview.

None of this is about catching people cheating. It's about confidence. When a candidate performs well and the integrity signals are clean, you can hire with confidence that you're getting the skills you evaluated.
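To make the tab-switching, paste, and typing signals above more concrete, here is a minimal Python sketch of how heuristics like these could look. It is not HireHut's implementation: the `FocusEvent` type, the function names, and every threshold (a 300-second window, a limit of three switches, a 0.3 variation cutoff) are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class FocusEvent:
    """One tab or window switch. All fields are hypothetical."""
    timestamp: float   # seconds since the interview started
    destination: str   # e.g. "docs", "search", "chat"


def flag_tab_switching(events, watched=("search", "chat"), window=300.0, limit=3):
    """Return True if more than `limit` switches to watched destinations
    fall inside any `window`-second span. Documentation visits are ignored."""
    times = sorted(e.timestamp for e in events if e.destination in watched)
    start = 0
    for end, t in enumerate(times):
        # Slide the window start forward until it spans at most `window` seconds.
        while t - times[start] > window:
            start += 1
        if end - start + 1 > limit:
            return True
    return False


def classify_paste(pasted: str, buffer_before_paste: str) -> str:
    """Label a paste 'internal' if the text already existed in the file."""
    snippet = pasted.strip()
    if not snippet:
        return "internal"
    return "internal" if snippet in buffer_before_paste else "external"


def typing_looks_unnatural(intervals, backspaces, min_cv=0.3):
    """Flag typing with almost no timing variation and no corrections.
    `intervals` are the gaps between keystrokes, in seconds."""
    if len(intervals) < 2:
        return False
    avg = mean(intervals)
    if avg == 0:
        return True
    cv = pstdev(intervals) / avg  # coefficient of variation
    return cv < min_cv and backspaces == 0
```

Signals like these would most sensibly feed a human reviewer rather than produce an automatic verdict, in line with the "log it but don't penalize" approach to occasional documentation lookups.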

Real-time AI evaluation

While candidates code, our AI is analyzing their work continuously.

Problem-solving approach: The system tracks how candidates break down problems. Do they start with a brute force solution and optimize? Do they plan first or dive into coding? Do they test edge cases? These patterns reveal how they think, not just what they know.

Code quality assessment: The AI evaluates style, readability, organization, and maintainability. Proper naming conventions, logical structure, appropriate comments - all the things that separate good code from functional code.

Efficiency analysis: We measure both execution efficiency (Big O complexity, memory usage) and coding efficiency (time to solution, lines of code, refactoring iterations). Some candidates write optimal algorithms slowly. Others write working code fast. You need both perspectives.

Error handling: How does the candidate handle bugs? Do they test their code? Do they catch edge cases? Do they validate inputs? The AI tracks all of this automatically.

Best practices adherence: The system checks for common best practices in the candidate's language - proper error handling, memory management, security considerations, and language-specific patterns.

All of this happens in real time, building a comprehensive evaluation that's far more detailed than human observation alone could provide.

Post-interview analytics that tell the whole story

When the interview ends, you don't just get a "pass" or "fail." You get a complete analysis.

Performance timeline: See exactly what happened minute by minute. When did they start coding? When did they hit roadblocks? When did they have breakthroughs? The timeline shows the entire problem-solving journey.

Code quality scores: Detailed breakdown of code quality across multiple dimensions - correctness, efficiency, readability, maintainability, and best practices. Each is scored and explained.

Skill assessment: Based on the code and approach, the AI identifies demonstrated skills. Data structure knowledge, algorithm design, debugging ability, optimization thinking - all mapped to skill levels.

Comparative benchmarks: How does this performance compare to other candidates for the same role? To successful hires in similar positions? To industry standards? Context matters.

Red and green flags: The system highlights both concerns (possible integrity issues, fundamental knowledge gaps) and strengths (elegant solutions, creative approaches, strong testing habits).

Video highlights: Key moments from the video feed synchronized with code events. See their reaction when they understood the problem, watch them work through a bug, observe their testing process.

AI-generated feedback: Constructive notes on what the candidate did well and where they could improve. Useful for rejected candidates who want to grow.

This isn't just data - it's a complete picture that makes the hiring decision much clearer.
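One way to picture how per-dimension scores and comparative benchmarks could fit together is the small sketch below. The five dimension names come from the list above; the weights, the 0-100 scale, and the function names are hypothetical, since the weighting isn't something we've described here.

```python
from bisect import bisect_right

# Hypothetical weights: the dimensions are named above, the weighting is not.
WEIGHTS = {
    "correctness": 0.35,
    "efficiency": 0.20,
    "readability": 0.15,
    "maintainability": 0.15,
    "best_practices": 0.15,
}


def overall_score(scores: dict[str, float]) -> float:
    """Weighted average of per-dimension scores on an assumed 0-100 scale."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(scores[name] * weight for name, weight in WEIGHTS.items())


def percentile_rank(score: float, cohort: list[float]) -> float:
    """Percentage of cohort scores at or below `score`."""
    if not cohort:
        raise ValueError("cohort is empty")
    ordered = sorted(cohort)
    return 100.0 * bisect_right(ordered, score) / len(ordered)
```

For example, `percentile_rank(70, [50, 60, 70, 80])` places a score of 70 at the 75th percentile of that four-person cohort. A single number hides a lot, so the per-dimension breakdown should always stay visible next to it.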

What this means for technical hiring

For recruiters and hiring managers

Confident decisions: You're not guessing whether someone can code. You have comprehensive data showing exactly what they can do.

Consistent evaluation: Every candidate gets the same environment, same monitoring, same evaluation criteria. You're comparing apples to apples.

Faster process: No more scheduling in-person coding sessions or waiting for take-home assignments. Candidates complete interviews on their schedule, and you get instant results.

Defensible hiring: When you make an offer or rejection, you can explain exactly why with concrete data. No more uncomfortable "we went with someone else" conversations where you can't articulate the difference.

Reduced bias: The AI evaluates code, not demographic factors. It doesn't care about accent, appearance, or school name. Just: can they code well?

For candidates

Better experience: Code in a real environment with proper tools, not a stressful whiteboard session or awkward screen share.

Fair evaluation: Everyone gets the same setup, same questions, same criteria. Performance matters, not comfort with unusual situations.

Clear feedback: Even if they don't get the job, they understand why and what to work on. That's rare and valuable.

Flexibility: Complete the interview when it works for their schedule, not when they can take time off their current job.

Transparency: They know they're being evaluated objectively. The best performers want fair assessment.

Real scenarios where this makes a difference

Scenario 1: The false negative

A candidate does poorly in a traditional whiteboard interview. Nervous, making simple mistakes, going blank under pressure. You reject them.

Later, you find out they're a senior engineer at a top company. The interview environment sabotaged their performance.

With HireHut's IDE, they code in a comfortable environment, the AI sees their systematic approach despite initial nervousness, and the data shows strong underlying skills. You make the right hire.

Scenario 2: The false positive

A candidate breezes through your take-home coding challenge. Perfect code, optimal algorithms, impressive. You're excited to hire them.

Three months later, you discover they're struggling with basic tasks. Turns out they had significant help with the interview.

With HireHut's integrity monitoring, you would have seen the warning signs - unnatural typing patterns, code appearing in perfect blocks, style inconsistencies. You would have investigated further before making the offer.

Scenario 3: The comparison problem

You interview five candidates. Some did whiteboard sessions, some did take-homes, one did a live coding session. They all seemed okay, but how do you compare them? Different problems, different environments, different evaluation styles.

You make your best guess based on interviewer notes. It doesn't work out.

With HireHut, all five candidates complete the same problems in the same environment with the same evaluation. The data shows clear performance differences. You hire the right person.

These scenarios play out constantly in technical hiring. The Smart Interview IDE is designed to prevent them.

The technical foundation

Building this required solving some genuinely hard problems.

Sandboxed execution environment: Code runs in an isolated sandbox so candidates can't access external resources or cause damage. The challenge is offering full language support while maintaining security.

Real-time analysis pipeline: AI evaluation running concurrently with coding without interfering or causing lag. Candidates code smoothly while the system analyzes in the background.

Behavioral pattern detection: Machine learning models trained on thousands of coding sessions to identify natural coding patterns versus suspicious behaviors.

Multi-modal analysis: Combining code analysis, typing patterns, video feeds, and timing data into a cohesive evaluation. Each data stream provides context for the others.

Language-agnostic evaluation: Code quality assessment that works across different languages with different idioms and best practices.
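To give a feel for the sandboxed execution item above, here is a deliberately simplified Python sketch that runs a submission in a separate process with a wall-clock limit. It is not a real sandbox and not our production design: it does nothing to block network or file access, which would take OS-level isolation such as containers or virtual machines. The function name `run_candidate_code` and the 5-second default are assumptions for the example.

```python
import subprocess
import sys


def run_candidate_code(source: str, timeout_s: float = 5.0) -> dict:
    """Run Python source in a child process with a wall-clock limit."""
    try:
        result = subprocess.run(
            # -I is Python's isolated mode: it ignores environment variables
            # and the user site directory. It is not a security boundary.
            [sys.executable, "-I", "-c", source],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child before raising TimeoutExpired.
        return {"timed_out": True, "stdout": "", "stderr": "", "exit_code": None}
    return {
        "timed_out": False,
        "stdout": result.stdout,
        "stderr": result.stderr,
        "exit_code": result.returncode,
    }
```

Even this small version shows the basic shape: the candidate's code never runs inside the evaluating process, and a runaway loop is cut off instead of hanging the session.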

This isn't just an editor with some monitoring tacked on. It's a sophisticated system purpose-built for technical evaluation.
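The real-time analysis pipeline mentioned above comes down to one idea: the editor hands work off and returns immediately, while analysis happens elsewhere. A minimal Python sketch of that pattern, using a queue and a worker thread, might look like this. The `BackgroundAnalyzer` class and the placeholder `analyze` function are hypothetical; a real system would run its models where the placeholder is.

```python
import queue
import threading


def analyze(snapshot: str) -> dict:
    """Placeholder analysis. A real system would run its models here."""
    return {"lines": snapshot.count("\n") + 1, "chars": len(snapshot)}


class BackgroundAnalyzer:
    """Accepts editor snapshots without blocking; analyzes them on a worker thread."""

    def __init__(self):
        self._snapshots = queue.Queue()
        self.results = []
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def submit(self, snapshot: str) -> None:
        """Called from the editor side; returns immediately."""
        self._snapshots.put(snapshot)

    def _run(self) -> None:
        while True:
            snapshot = self._snapshots.get()
            if snapshot is None:  # sentinel: stop the worker
                break
            self.results.append(analyze(snapshot))

    def close(self) -> None:
        """Finish pending work, then stop the worker."""
        self._snapshots.put(None)
        self._worker.join()
```

Because a single worker drains the queue in order, results line up with the sequence of snapshots, which is what a minute-by-minute timeline needs.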

How it compares to alternatives

vs. Whiteboard interviews: Actually evaluates coding ability instead of whiteboard performance. Objective data instead of subjective impressions.

vs. Take-home assignments: Fair time limits and integrity monitoring instead of unknown working conditions. Candidates can't spend all weekend or get unlimited help.

vs. Generic online editors: Full IDE experience instead of bare-bones text boxes. Professional evaluation instead of just checking if code runs.

vs. In-person coding: Flexible scheduling and consistent environment instead of logistics nightmares. Complete data capture instead of interviewer notes.

The Smart Interview IDE takes the best aspects of each approach while eliminating the weaknesses.

Getting started with better technical hiring

If you're still using whiteboard interviews or unmonitored take-homes, you're making hiring decisions with incomplete information. Some great candidates look bad because of the environment. Some poor candidates look good because they had help. You'll never know which is which.

The Smart Interview IDE gives you confidence. Not because it's AI-powered - because it provides comprehensive, objective data about what candidates can actually do.

Visit hirehut.org to see the IDE in action or schedule a demo where we'll walk through a real technical interview evaluation.

Technical hiring is hard enough. Your interview tools shouldn't make it harder.

Evaluate skills, not stress management. That's what coding interviews should be.